Insurance

Case Studies

Automated Data Ingestion and Transformation

Framework for Insurance Analytics

About the Client

A leading insurance organization operating across multiple business domains required a scalable data platform to manage and analyze large volumes of operational data. The organization maintained separate systems for Claims, CRM, HR, Policy Administration (PAS), and Sales Force Management (SFM).

The objective was to integrate these disparate datasets into a unified architecture and transform raw data into a reliable, analytics-ready format for business reporting and decision-making.

Challenges

- The client operated across multiple business domains such as Claims, CRM, HR, PAS, and SFM, with data distributed across disparate systems, making it difficult to establish a unified and consistent view for analytics.

- Data originated from multiple sources with varying formats, schemas, and naming conventions, leading to significant challenges in standardization, integration, and ensuring data quality for reliable reporting.

- Establishing secure and stable connectivity between on-premises SQL Server and Microsoft Fabric required proper configuration of the On-Premises Data Gateway and careful handling of data movement.

- Designing a scalable ingestion framework capable of dynamically processing multiple tables across domains using metadata-driven approaches introduced complexity in pipeline design.

- Handling schema variability, inconsistent data types, and missing or null values across datasets required robust data validation and transformation logic to maintain data integrity.

- Ensuring reliable, repeatable, and orchestrated data pipelines with proper dependency management was critical to avoid duplication and guarantee consistent data processing across all layers.

Solutions

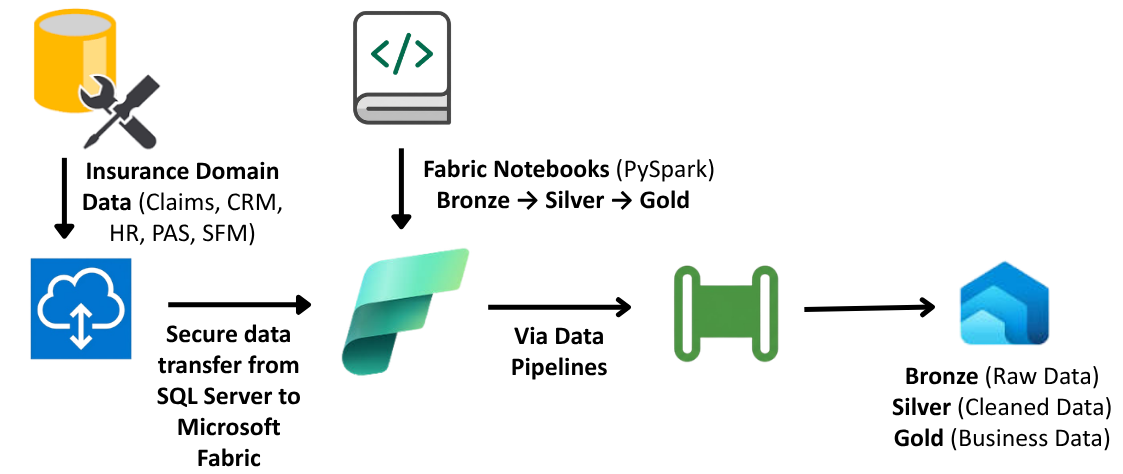

- A scalable and automated data pipeline was implemented using Microsoft Fabric, leveraging the Medallion Architecture to enable structured data processing across Bronze, Silver, and Gold layers.

- Data was securely ingested from on-premises SQL Server into the Fabric Lakehouse using an On-Premises Data Gateway, ensuring reliable and consistent connectivity between on-prem and cloud environments.

- A metadata-driven ingestion framework was developed using Fabric Data Pipelines, utilizing Lookup and ForEach activities to dynamically process multiple tables across business domains, enabling scalable and efficient data ingestion.

- Data transformation and standardization were performed using Fabric Notebooks (PySpark), including handling missing values, deduplication, schema alignment, and implementation of business logic to generate analytics-ready datasets.

- The end-to-end pipeline was fully orchestrated to ensure automated execution, dependency management, and consistent data processing, delivering a reliable and scalable data platform.

Architecture

Impact of the Solution

- Established a unified data platform by integrating multiple business domains into a centralized Lakehouse, enabling seamless cross-functional analysis and reporting.

- Improved data quality and consistency through standardized data processing, validation, and cleansing, resulting in more reliable and accurate analytics.

- Automated end-to-end data pipelines, eliminating manual intervention and significantly improving operational efficiency and data processing reliability.

- Implemented a scalable and flexible architecture capable of handling increasing data volumes while maintaining performance and ease of maintenance.

- Enabled faster data availability and improved accessibility to analytics-ready datasets, supporting quicker and more informed business decision-making.

Technologies Used

- Microsoft Fabric

- Fabric Data Pipeline

- Fabric Lakehouse

- Fabric Notebooks (PySpark)

- SQL Server (SSMS)

- On-Premises Data Gateway